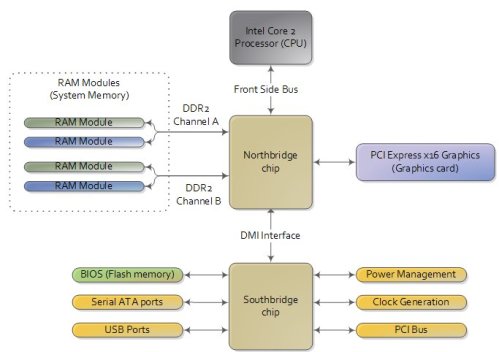

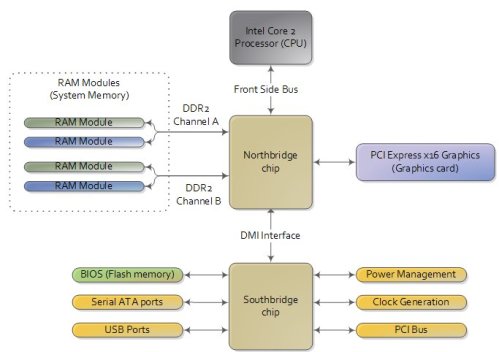

To start off let’s take a look at how an computer is wired up nowadays:

The CPU communicates with the outside world in three main ways: through the memory address space, through the I/O address space, and through interrupts.

On a motherboard, the CPU’s gateway to the world is the front-side bus, which connects it to the northbridge. Whenever the CPU needs to read or write memory, it does so via this bus: some pins transmit the physical memory address it wants to read or write, while other pins send the value to be written or receive the value being read.

Now comes the rub. We’re used to thinking of memory only in terms of RAM, the stuff programs read from and write to all the time. And indeed most of the memory requests from the processor are routed to RAM modules by the northbridge. But not all of them. Physical memory addresses are also used to communicate with assorted devices on the motherboard. These devices include video cards, most PCI cards (say, a scanner or SCSI card), and also the flash memory that stores the BIOS.

When the northbridge receives a physical memory request, it decides where to route it: should it go to RAM? To the video card, maybe? This routing is decided by the memory address map. For each region of physical memory addresses, the memory map knows which device owns that region. The bulk of the addresses are mapped to RAM, but when they aren’t, the memory map tells the chipset which device should service requests for those addresses. This mapping of memory addresses away from RAM modules causes the classic hole in PC memory between 640 KB and 1 MB. A bigger hole arises when memory addresses are reserved for video cards and PCI devices. This is why 32-bit OSes have problems using 4 gigs of RAM. In Linux, the file /proc/iomem neatly lists these address range mappings. The second diagram above shows a typical memory map for the first 4 gigs of physical memory addresses in a PC.
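The routing decision can be pictured as a lookup in a sorted table of address ranges. The Python below is a toy model of that idea, not how a chipset works (real chipsets decode addresses in hardware). It parses text in the `start-end : owner` format that /proc/iomem uses and reports which owner a physical address falls into. The ranges in `SAMPLE_IOMEM` are made up for illustration, and `Region`, `parse_iomem`, and `route` are hypothetical names. On a real Linux machine you could feed it the contents of /proc/iomem, although unprivileged reads may show zeroed addresses.

```python
from __future__ import annotations

import bisect
from dataclasses import dataclass

# Made-up excerpt in /proc/iomem format; real ranges vary per machine.
SAMPLE_IOMEM = """\
00000000-0009ffff : System RAM
000a0000-000bffff : Video RAM area
000c0000-000cffff : Video ROM
000f0000-000fffff : System ROM
00100000-bfffffff : System RAM
c0000000-dfffffff : PCI Bus 0000:00
  d0000000-dfffffff : 0000:01:00.0
fee00000-fee00fff : Local APIC
ffe00000-ffffffff : reserved
"""


@dataclass(frozen=True)
class Region:
    start: int  # first address in the region
    end: int    # last address, inclusive (as /proc/iomem prints it)
    owner: str


def parse_iomem(text: str) -> list[Region]:
    """Parse the top-level lines of /proc/iomem-style text into sorted regions."""
    regions = []
    for line in text.splitlines():
        if not line.strip() or line[0].isspace():
            continue  # skip blank lines and indented sub-ranges
        span, owner = line.split(" : ", 1)
        start, end = (int(part, 16) for part in span.split("-"))
        regions.append(Region(start, end, owner))
    return sorted(regions, key=lambda r: r.start)


def route(regions: list[Region], address: int) -> str | None:
    """Return who services a physical address, or None if nothing claims it."""
    starts = [r.start for r in regions]
    i = bisect.bisect_right(starts, address) - 1  # last region starting <= address
    if i >= 0 and address <= regions[i].end:
        return regions[i].owner
    return None


if __name__ == "__main__":
    regions = parse_iomem(SAMPLE_IOMEM)
    for addr in (0x7C00, 0xB8000, 0x40000000, 0xD0000000, 0xE0000000):
        print(f"{addr:#010x} -> {route(regions, addr)}")
```

In this sample, addresses such as 0xB8000 and 0xD0000000 land on devices rather than RAM, and an address nothing claims (0xE0000000 here) returns `None`.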

Actual addresses and ranges depend on the specific motherboard and devices present in the computer, but most Core 2 systems are pretty close to the above. All of the brown regions are mapped away from RAM. Remember that these are physical addresses, used on the motherboard buses. Inside the CPU (for example, in the programs we run and write), memory addresses are logical, and the CPU must translate them into physical addresses before memory is accessed on the bus.
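To make “translation” concrete, consider the simplest case: real mode, one of the modes discussed next. There, a logical address is a 16-bit segment plus a 16-bit offset, and the physical address is segment × 16 + offset, with no paging involved. The sketch below models only that rule and ignores protected-mode and 64-bit translation entirely. The function name and the `a20_enabled` parameter are hypothetical. On the 8086, with its 20 address lines, results above 1 MB wrap around to 0. Later CPUs reproduce that behavior by gating the A20 address line.

```python
def real_mode_physical(segment: int, offset: int, a20_enabled: bool = False) -> int:
    """Translate a real-mode segment:offset pair into a physical address.

    physical = segment * 16 + offset. With only 20 address lines (the 8086,
    or a later CPU with the A20 line disabled) the result wraps at 1 MB.
    """
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must be 16-bit values")
    address = (segment << 4) + offset
    return address if a20_enabled else address & 0xFFFFF  # keep only 20 bits


if __name__ == "__main__":
    print(hex(real_mode_physical(0x07C0, 0x0000)))        # 0x7c00
    print(hex(real_mode_physical(0xFFFF, 0x0010)))        # 0x0 (wrapped)
    print(hex(real_mode_physical(0xFFFF, 0x0010, True)))  # 0x100000
```

The highest address this scheme can form, 0xFFFF:0xFFFF, is 0x10FFEF, just under 64 KB past the 1 MB mark. That overhang is why the wraparound behavior matters.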

The rules for translating logical addresses into physical addresses are complex, and they depend on the mode in which the CPU is running (real mode, 32-bit protected mode, or 64-bit protected mode). Regardless of the translation mechanism, the CPU mode determines how much physical memory can be accessed. For example, if the CPU is running in 32-bit mode, it can physically address only 4 GB, or 2^32 bytes (well, there is an exception called physical address extension, but ignore it for now). Since the top 1 GB or so of physical addresses is mapped to motherboard devices, the CPU can effectively use only ~3 GB of RAM (sometimes less – I have a Vista machine where only 2.4 GB are usable). If the CPU is in real mode, it can address only 1 megabyte (2^20 bytes) of physical RAM, which was the only mode early Intel processors were capable of. On the other hand, a CPU running in 64-bit mode can physically address much more: typical Core 2 processors implement 36 physical address bits, which gives 64 GB (few chipsets support that much RAM, though). In 64-bit mode it is possible to use physical addresses above the total RAM in the system to access the RAM regions whose physical addresses were stolen by motherboard devices. This is called reclaiming memory, and it’s done with help from the chipset.
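The arithmetic behind these limits is just powers of two: 20 address bits give 1 MB, 32 give 4 GB, and 36 give 64 GB. The Python sketch below combines that arithmetic with the device hole and reclaiming in a toy model. It rests on several hypothetical simplifications that do not come from any chipset specification:

- RAM starts at physical address 0.
- The device hole is a single block directly below 4 GB, and the small 640 KB to 1 MB hole is ignored.
- 64-bit mode uses 36 physical address bits.
- PAE is ignored.
- Reclaimed RAM is placed starting at the top of installed RAM or at 4 GB, whichever is higher.

The mode labels and function names are illustrative only.

```python
GIB = 1 << 30
FOUR_GIB = 4 * GIB

# Physical address widths per mode. 36 bits for 64-bit mode is an assumption
# matching typical Core 2 processors; PAE is ignored, as in the text.
PHYS_BITS = {"real": 20, "32-bit": 32, "64-bit": 36}


def ram_ranges(installed, hole, reclaim):
    """Physical [start, end) ranges where RAM shows up in this toy model."""
    below = min(installed, FOUR_GIB - hole)  # RAM visible under the device hole
    above = max(installed - FOUR_GIB, 0)     # RAM that lives above 4 GiB anyway
    stolen = installed - below - above       # RAM shadowed by device addresses
    ranges = [(0, below)]
    if above:
        ranges.append((FOUR_GIB, FOUR_GIB + above))
    if reclaim and stolen:
        top = max(installed, FOUR_GIB)       # remap above the total RAM
        ranges.append((top, top + stolen))
    return ranges


def usable_ram(installed, hole, mode, reclaim=False):
    """Bytes of RAM whose physical addresses fit in the mode's address width."""
    limit = 1 << PHYS_BITS[mode]
    return sum(max(0, min(end, limit) - start)
               for start, end in ram_ranges(installed, hole, reclaim))


if __name__ == "__main__":
    for mode, bits in PHYS_BITS.items():
        print(f"{mode:>6}: {(1 << bits) // (1 << 20)} MiB addressable")
    box = dict(installed=4 * GIB, hole=1 * GIB)
    print(usable_ram(**box, mode="32-bit") / GIB)
    print(usable_ram(**box, mode="64-bit") / GIB)
    print(usable_ram(**box, mode="64-bit", reclaim=True) / GIB)
```

Under these assumptions, a machine with 4 GB of RAM and a 1 GB hole keeps 3 GB in 32-bit mode. It gets the full 4 GB back only in 64-bit mode with reclaiming. Reclaiming alone does nothing for a 32-bit OS, because the remapped RAM lands above 4 GB.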

That’s all the memory we need for the next post, which describes the boot process from power up until the boot loader is about to jump into the kernel. If you’d like to learn more about this stuff, I highly recommend the Intel manuals. I’m big into primary sources overall, but the Intel manuals in particular are well written and accurate.
